About

This article talks about Stream Processing vs Batch Processing.

The most important difference is that:

- in batch processing, the size (cardinality) of the data to process is finite.

- The cardinality may not be known, but the data will end at some point in the future,

- meaning that each time a batch process asks for a message, it will get one (until the data ends).

- whereas in stream processing, it is infinite,

- meaning that each time a stream process asks for a message, it may not get any.
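The difference in cardinality can be sketched in a few lines of Python. This is only an illustration under assumptions: the names `process_batch` and `poll_stream` are hypothetical, a plain list stands in for a finite batch input, and an in-memory `queue.Queue` stands in for a stream source.

```python
import queue


def process_batch(records):
    """Batch: the input is finite, so the loop ends on its own."""
    total = 0
    for value in records:
        total += value
    return total


def poll_stream(source, timeout=0.01):
    """Stream: a poll may come back empty; return None in that case."""
    try:
        return source.get(timeout=timeout)
    except queue.Empty:
        return None


events = queue.Queue()
first_poll = poll_stream(events)   # nothing has arrived yet
events.put(42)
second_poll = poll_stream(events)  # a message is now available
batch_total = process_batch([1, 2, 3])
```

The batch function can simply iterate to the end, while the stream consumer has to decide what to do when a poll returns nothing (wait, retry, or do other work).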

Do it once at night vs. do it continuously

Many organizations are building a hybrid model that combines the two approaches, maintaining both a real-time layer and a batch layer.

- Data is first processed by a streaming data platform to extract real-time insights, and then persisted into a store.

- The stored data can then be transformed and loaded for a variety of batch processing use cases.
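As a rough sketch of this hybrid flow, the example below keeps a running count as the real-time insight, persists every event, and later runs a batch aggregation over the store. The class `HybridPipeline`, the event fields `user` and `amount`, and the in-memory list used as a store are all assumptions made for illustration.

```python
class HybridPipeline:
    def __init__(self):
        self.store = []         # stand-in for the persistent store
        self.running_count = 0  # real-time insight kept by the streaming layer

    def on_event(self, event):
        """Streaming layer: update the insight, then persist the event."""
        self.running_count += 1
        self.store.append(event)
        return self.running_count

    def batch_report(self):
        """Batch layer: aggregate everything persisted so far, per user."""
        totals = {}
        for event in self.store:
            user = event["user"]
            totals[user] = totals.get(user, 0) + event["amount"]
        return totals


pipeline = HybridPipeline()
for event in [
    {"user": "a", "amount": 10},
    {"user": "b", "amount": 5},
    {"user": "a", "amount": 7},
]:
    pipeline.on_event(event)

report = pipeline.batch_report()
```

The streaming path answers immediately with a cheap metric, while the batch path can afford a full pass over the stored data.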

You may also be interested in the lambda architecture, a data pattern that takes advantage of both models.

Features Comparison

| Feature | Stream | Batch | Description |

|---|---|---|---|

| Scheduler Mode | Publish / Subscribe | Flow | In batch mode, a workflow scheduler runs the processes, whereas in stream mode it is a publisher / subscriber model |

| Resources | |||

| CPU | Low | Intensive | In a batch architecture, the CPU is busy moving around old data most of the time. |

| IO | Low | High | In a batch architecture, the CPU is busy moving a lot of data, whereas on a stream platform only the delta is taken into account |

| Performance | Quick | Slow | Stream means less data motion |

| Throughput Requirement | Low | High | Batch processing (like MapReduce) is often used for processing large historical collections of data in a short period of time (e.g. query a month of data in ten minutes), whereas stream processing mostly needs to keep up with the steady-state flow of data (process 10 minutes' worth of data in 10 minutes). |

| System Properties | |||

| Coupling / Dependency | Low | High | Change is difficult because shared data introduces strong dependencies. Dependencies are external (in the workflow) vs. internal (in the process) |

| Inconsistency | Only latency | Yes | If an ETL step breaks, the whole data set ends up being inconsistent. That's why caches are always fact based and not table based. Example: a full dimension load must succeed before a fact load |

| Fault Tolerance | Yes | No | In a batch architecture, the steps of the workflow depend on each other, and the workflow fails when one of its steps fails. A stream orchestration can be fixed and restarted during the day |

| Failure Management | |||

| Restart | Yes | No | In a batch model, the restart point after a failure is not well known, whereas in a stream model each process can be restarted (it must still be idempotent) |

| Resources Management | |||

| CPU Load | Incremental | Not predictable | In a batch architecture, it's really difficult to forecast the future load, as one big batch is enough to drive the CPU high. Stream makes capacity planning (hardware prediction) easier. The weekly or monthly peaks due to batch processes (such as financial invoicing) are easily spotted. |

| Process must be prioritized | No | Yes | When the load becomes too high, choices must be made on a batch platform, whereas on a streaming platform you just cap the number of running processes |

| Data | |||

| Scope | Rolling time window or one record | Whole dataset | Queries or processing over data |

| Size | Few / Potentially infinite | Large / Known | Individual records or micro batches consisting of a few records vs. large batches of data. |

| Analytics | Simple | Complex | Stream uses simple response functions, aggregates, and rolling metrics |

| .. | |||
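The Restart row notes that a restartable stream process must still be idempotent. One common way to get there is to remember which messages were already applied, so that a replay after a restart does not double count. The sketch below assumes each event carries a unique `id` field and uses a hypothetical `apply_events` function; neither comes from a specific platform.

```python
def apply_events(state, events):
    """Apply events to state, skipping any event id that was already applied."""
    for event in events:
        if event["id"] in state["seen"]:
            continue  # replayed after a restart: applying it again would double count
        state["seen"].add(event["id"])
        state["total"] += event["amount"]
    return state


state = {"seen": set(), "total": 0}
events = [{"id": 1, "amount": 10}, {"id": 2, "amount": 5}]

apply_events(state, events)
apply_events(state, events)  # simulated restart that replays the same events
```

After the replay the total is unchanged, which is what makes restarting from an approximate position safe.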

Access Pattern Comparison

A database and a message queue both store data, but they have different data structures and therefore different access patterns, which means:

- different performance characteristics.

- different implementations
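To make the contrast concrete, here is a minimal in-memory sketch: a `dict` stands in for a database table accessed by key, and a `collections.deque` stands in for a message queue consumed in arrival order. Both stand-ins, and the sample keys and messages, are assumptions for illustration only.

```python
from collections import deque

# Database-like access: random reads and in-place updates by key.
table = {101: "alice", 102: "bob"}
table[101] = "alicia"  # update an existing row in place
lookup = table[102]    # read any row directly by its key

# Queue-like access: append at the tail, consume from the head.
log = deque()
log.append("order-created")
log.append("order-paid")
first = log.popleft()  # the oldest message comes out first
```

The table is optimized for finding and changing a given record, while the queue is optimized for sequential appends and reads.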

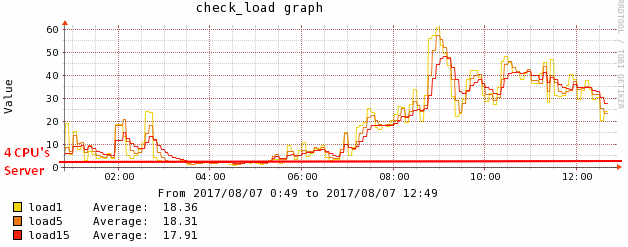

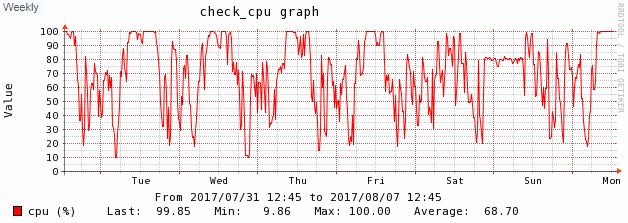

Typical Batch Orchestration CPU metrics

Below are some typical graphs showing the CPU load of a computer:

- that runs only batch processing,

- after a couple of years.

The processes add up slowly every year until the computer can't handle the load anymore.

You can see:

- the processes running during the night (not steady, but with variations)

- around 7am, when the users come back and ask for their morning reports, the computer goes to 100% and can't handle the load anymore.

This pattern repeats every day of the week. You don't even need to see the day on the axis of the graph.

Every single week is above 50% of load. Note that above this limit, the response is no longer linear because there is a lot of waiting between processes.
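One way to see why response degrades non-linearly with load is a textbook queueing model. The sketch below assumes a single-server M/M/1 queue, which is not stated in this article and is only an approximation of a real batch server; in that model the mean response time is the service time divided by (1 - utilization). The function name `mean_response_time` is hypothetical.

```python
def mean_response_time(service_time, utilization):
    """Mean response time of an M/M/1 queue (assumed model, not measured data)."""
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be in [0, 1)")
    return service_time / (1.0 - utilization)


# Response time for a job that needs 1 time unit of CPU, at rising load.
at_idle = mean_response_time(1.0, 0.0)  # no waiting at all
at_half = mean_response_time(1.0, 0.5)  # twice the bare service time
at_high = mean_response_time(1.0, 0.9)  # roughly ten times the service time
```

Under this assumption, going from 50% to 90% load multiplies the response time by about five, even though the load has not even doubled.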

Note on ETL Tool and Real time

ETL tools were built to focus on connecting databases and the data warehouse in a batch fashion.

An early take on real-time ETL was Enterprise Application Integration (EAI).

EAI is a different class of data integration technology for connecting applications in real-time.

EAI employed Enterprise Service Buses and MQs, which weren't scalable (push method and index management done on the server side).